Drop-downs masquerade as textboxes. Checkboxes come pre-checked. Search bars are secretly buttons. A government portal in Delaware goes offline at night. Sometimes a form tells you to call the IRS or send a fax to proceed.

Suchintan Singh and Shuchang Zheng didn't set out to catalog the web's hostility toward automation. They kept running into it. Singh had built ML platforms at Faire and Gopuff, infrastructure for search and discovery at two marketplaces where systems are supposed to behave predictably. Zheng spent five years on developer tooling: a simulation platform and testing framework at Lyft used by over a thousand engineers, then payment infrastructure at Patreon processing 20M+ transactions monthly. Both learned how often predictable systems aren't.

Their first startup together was an engineer onboarding tool. It didn't take. Their second, Wyvern, was an open-source ML platform for marketplace revenue. They launched it on Hacker News. Ten upvotes. Never left the "newest" filter. But across both attempts, the web automations they tried to build around their products kept breaking. The underlying task hadn't changed. A site redesigned, a popup appeared, an element that looked like one thing behaved like another.

"Requirements for automations are ambiguous at best, and misleading at worst — even humans struggle to define them clearly."

The response, Skyvern, started from a blunt architectural bet: stop reading code. Most agents fail because they try to parse HTML full of obfuscation and dynamic rendering. Skyvern screenshots the page instead and uses a vision model to find what looks like a checkout button. If the HTML changes but the visual interface stays recognizable, the automation survives. A Validator checks the screen after every action to confirm it actually worked, because actions fail silently all the time: a popup blocks a click, a CAPTCHA appears after submit, a page loads but nothing changes.
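The act-then-validate idea can be sketched in a few lines. This is an illustration only, not Skyvern's code: `run_validated`, `StepResult`, and the string-based "screen" are hypothetical stand-ins. In a real agent, `observe` would presumably capture a screenshot and `expect` would ask a vision model whether the goal state is visible.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StepResult:
    action: str
    succeeded: bool
    reason: str


def run_validated(
    action: str,
    act: Callable[[], None],
    observe: Callable[[], str],
    expect: Callable[[str], bool],
) -> StepResult:
    """Perform one action, then check the screen instead of trusting the click."""
    before = observe()
    act()
    after = observe()
    if after == before:
        # Silent failure: e.g. a popup swallowed the click.
        return StepResult(action, False, "screen did not change")
    if not expect(after):
        # Something happened, but not the thing we wanted (e.g. a CAPTCHA).
        return StepResult(action, False, "screen changed, but not as expected")
    return StepResult(action, True, "ok")


# Hypothetical stand-in for a browser: the "screen" is just a string.
screen = {"text": "cart: 1 item"}
observe = lambda: screen["text"]
order_confirmed = lambda text: "order confirmed" in text


def blocked_click() -> None:
    pass  # a popup intercepted the click; nothing changes


def captcha_click() -> None:
    screen["text"] = "captcha: prove you are human"


def working_click() -> None:
    screen["text"] = "order confirmed"


r_blocked = run_validated("submit", blocked_click, observe, order_confirmed)
r_captcha = run_validated("submit", captcha_click, observe, order_confirmed)
r_ok = run_validated("submit", working_click, observe, order_confirmed)
```

The three calls correspond to the failure modes named above: a blocked click leaves the screen unchanged, a CAPTCHA changes it in the wrong way, and only the last call passes validation.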

That was necessary groundwork, but the architecture didn't stop there.

Keeping an LLM in the loop for every click is expensive and non-deterministic. So Skyvern's explore-then-replay pattern has the agent reason through a workflow once, then compile that reasoning into deterministic code for subsequent runs. Plain Playwright, no model. When the compiled code breaks, the system doesn't guess a new selector. It falls back to the intention it captured during the original exploration, asks the model how to accomplish that same goal on the changed page, and recompiles. The recovery unit is "select legal structure: Corporation," not "click the third button." The relationship between a user's input and the available options on a given page doesn't exist ahead of time. It has to be discovered at runtime, every time the surface shifts.
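A minimal sketch of explore-then-replay with intention-level recovery follows. Everything here is assumed for illustration: the page is modeled as a dict of selector to visible label, `CompiledStep` and `replay` are hypothetical names, and `fake_model_resolve` stands in for the model call. It is not Skyvern's implementation, and a real replay would drive Playwright rather than a dict.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Page = Dict[str, str]  # selector -> visible label (stand-in for a live page)


@dataclass
class CompiledStep:
    intention: str  # what the step is for, captured during exploration
    selector: str   # what exploration compiled that intention down to


def fake_model_resolve(intention: str, page: Page) -> str:
    """Stand-in for the LLM: find the element whose label matches the goal."""
    wanted = intention.split(":", 1)[1].strip().lower()
    for selector, label in page.items():
        if label.strip().lower() == wanted:
            return selector
    raise LookupError(f"no element satisfies {intention!r}")


def replay(
    steps: List[CompiledStep],
    page: Page,
    resolve: Callable[[str, Page], str],
) -> Tuple[List[str], int]:
    """Run compiled steps; call the model only when a step no longer fits."""
    clicked: List[str] = []
    model_calls = 0
    for step in steps:
        if step.selector not in page:
            # Recover from the intention, not from the stale selector,
            # then recompile so later runs are deterministic again.
            step.selector = resolve(step.intention, page)
            model_calls += 1
        clicked.append(step.selector)
    return clicked, model_calls


steps = [CompiledStep("select legal structure: Corporation", "#opt-2")]
original = {"#opt-1": "LLC", "#opt-2": "Corporation"}
redesigned = {"#structure-a": "Corporation", "#structure-b": "LLC"}

first = replay(steps, original, fake_model_resolve)     # no model needed
second = replay(steps, redesigned, fake_model_resolve)  # one recovery call
third = replay(steps, redesigned, fake_model_resolve)   # recompiled, no model
```

The sketch only detects a missing selector. A selector that still exists but now points at a different option would slip through, which is one reason a post-action validation step matters in the design described above.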

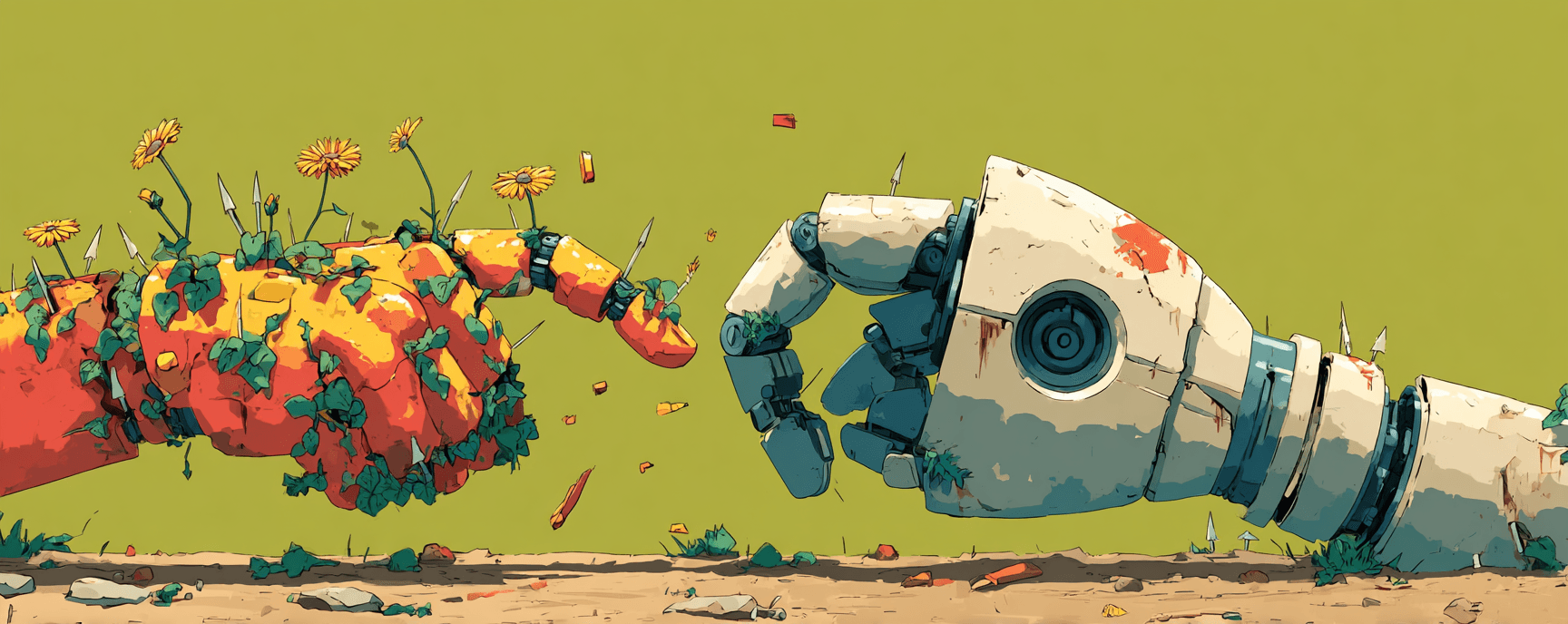

The adversarial framing is concrete here. A dropdown is just a list of buttons to an agent. The mapping between what a user means and what the page offers is constructed in the moment, and the page has no obligation to make that construction easy. The web lies about its structure. It also, increasingly, lies about its content, serving different data to different visitors depending on how they arrive. Skyvern's architecture addresses the structural deception. The content deception remains largely unowned across the industry.

The company: a team of about eight in San Francisco, $2.7M in seed funding, and an open-source launch that hit #1 on Hacker News.

Two pivots earlier, Singh and Zheng had learned something more specific than what the Hacker News community kept circling. They could always build the automation. The environment kept tearing it apart.

Things to follow up on...

- Infrastructure vs. intelligence gap: Browserless.io's 2026 state of browser automation report frames the shift from scripts to AI-driven agents and why governance and observability are now the bottleneck, not model capability.

- Legacy systems as adversaries: A University of Washington analysis describes how Amazon's AGI Lab trains agents on high-fidelity simulations of legacy systems because the real behaviors of those systems, including quirks and silent failures, must be discovered rather than specified.

- Silent failures in production: A practitioner taxonomy documents how a single failed API call can lead an agent to hallucinate data rather than report an error, optimizing for completion over correctness.

- The 40% scrapped prediction: Gartner predicts that over 40% of agentic AI projects will be abandoned by 2027 not because models fail, but because organizations can't operationalize them past pilot stage.