Echoes

A text file from 1994 still governs AI's relationship with the web. The problem isn't the protocol. It's that you can't ask the burglar to build the lock.

The Bargain That Held for Thirty Years

In 1994, a science fiction novelist's web crawler kept crashing someone's server. The fix was a plain text file asking automated visitors to stay off certain pages. That file, robots.txt, held for thirty years, because obeying it paid.

Recent Cloudflare data puts one ratio at 73,000 pages crawled for every single referral returned. Proposals for better replacements keep arriving. But the HTTPS transition offers an uncomfortable comparison, a case where enforcement actually worked and a clear picture of what made it possible. The whole thing turns on who holds the chokepoint, and what they stand to gain.

Twenty Thousand to One

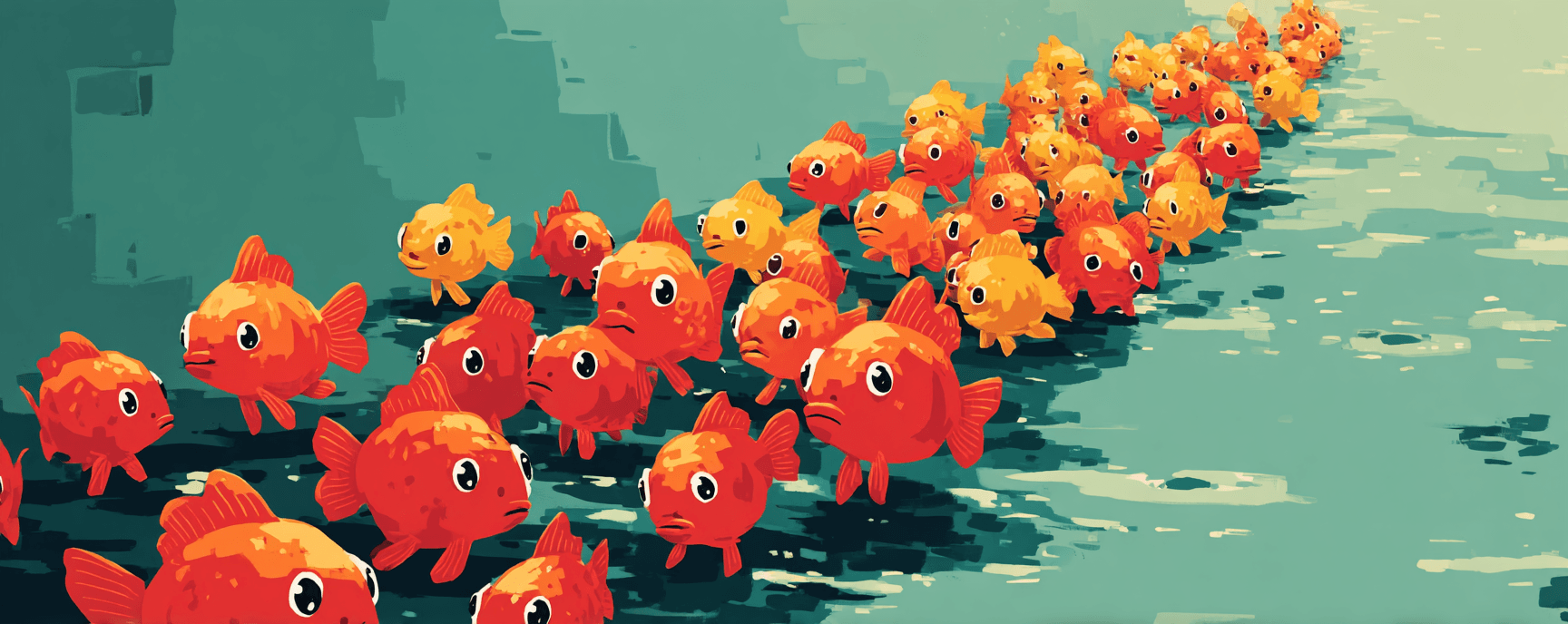

Googlebot crawls roughly five pages for every visitor it sends back. That ratio held for two decades. It was the deal.

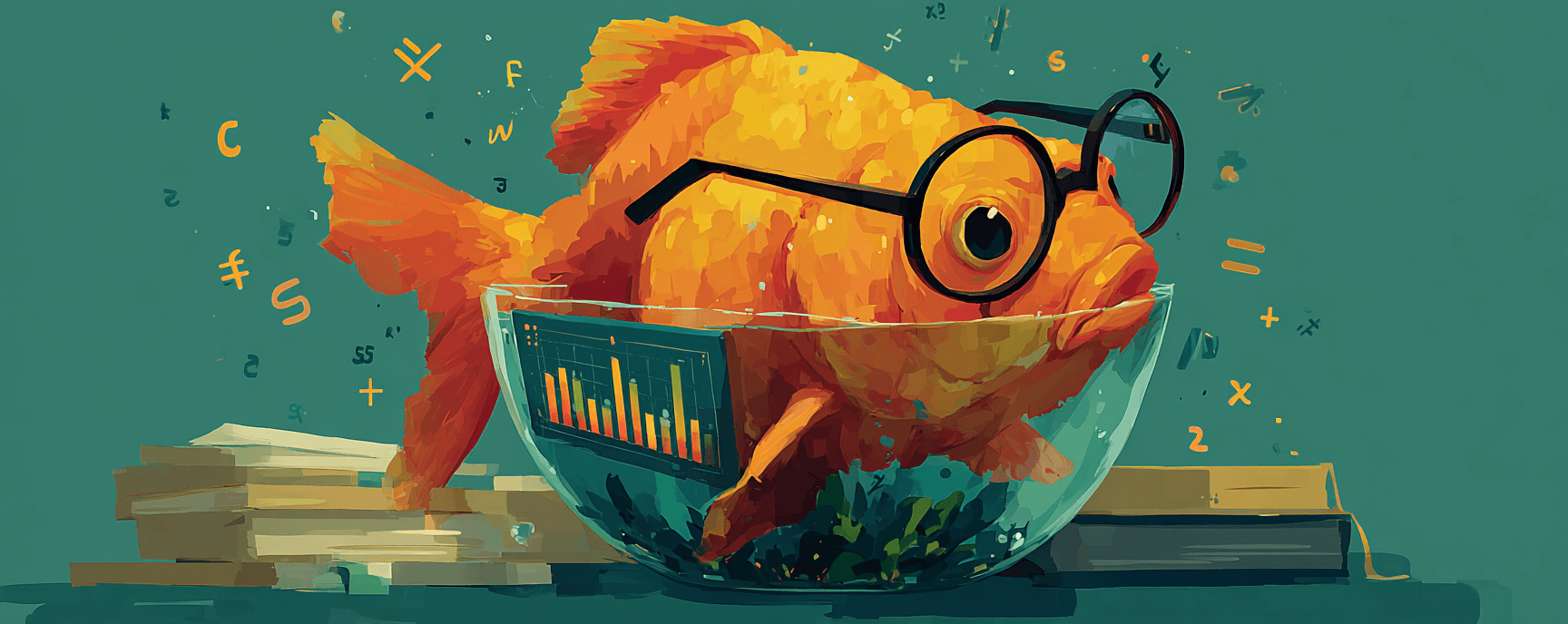

Cloudflare's Q1 2026 data shows what replaced it. Anthropic's ClaudeBot crawls 20,583 pages for every single referral it returns to publishers. Meta-ExternalAgent, the second-highest-volume AI crawler on the web, returns zero referrals. Not a rounding error. Zero.
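To make the scale of that shift concrete, here is a minimal arithmetic sketch using only the two ratios quoted above (roughly 5 to 1 for Googlebot, 20,583 to 1 for ClaudeBot). The function name and the 1,000-page crawl count in the zero-referral case are illustrative assumptions, not figures from Cloudflare.

```python
import math


def crawl_to_referral_ratio(pages_crawled: int, referrals: int) -> float:
    """Pages crawled per referral sent back; infinite when nothing comes back."""
    if referrals == 0:
        # A crawler that returns no referrals has no finite ratio.
        return math.inf
    return pages_crawled / referrals


# Ratios as quoted in the article.
googlebot = crawl_to_referral_ratio(5, 1)
claudebot = crawl_to_referral_ratio(20_583, 1)

# Hypothetical crawl count, used only to show the zero-referral case.
zero_referral_crawler = crawl_to_referral_ratio(1_000, 0)

# How many times more lopsided the newer ratio is than the old bargain.
print(claudebot / googlebot)
print(zero_referral_crawler)
```

On these numbers the newer ratio is about four thousand times more lopsided than the old one, and a crawler that returns nothing falls off the scale entirely.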

Of all AI crawler traffic, 89.4% serves training or mixed purposes. Only 2.2% responds to an actual human query. The cooperative norm still exists on paper. The economics underneath it inverted quietly, and then all at once.

Blind Spots and Inherited Frames

The Invisible Agent

Robots.txt governs web crawling through one mechanism: matching a text label that automated visitors voluntarily attach to themselves. For thirty years, crawlers wore that label because they needed to. The AI agents arriving now don't crawl. They open real browsers, render pages, click through interfaces. They carry the same user-agent string as the person who launched them. The protocol that governs automated access to the web literally cannot see what's visiting.
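The label-matching mechanism can be sketched with Python's standard-library `urllib.robotparser`. This is an illustration under stated assumptions, not any site's real policy: the bot name `ExampleAIBot`, the rules, the URLs, and the browser user-agent string are all made up for the example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one self-declared AI crawler entirely,
# and keep every other visitor out of /private/ only.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Disallow: /private/
"""

# An ordinary desktop browser user-agent string (illustrative). An agent
# driving a real browser would present something like this.
BROWSER_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36"
)

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/articles/1"

# The rule applies only to a visitor that announces the matching label.
declared_bot_allowed = parser.can_fetch("ExampleAIBot", url)

# The same request under a browser label falls through to the "*" rules.
browser_agent_allowed = parser.can_fetch(BROWSER_UA, url)

print(declared_bot_allowed, browser_agent_allowed)
```

The parser has nothing to go on except the string it is handed. Whether a person or an agent is behind the browser label is not something the file can express, and compliance still depends on the visitor running this check at all.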

The Successor Trap

When robots.txt stopped working, replacements arrived fast. The proposals include llms.txt, ai.txt, and Google's WebMCP, each citing robots.txt as ancestor, each proposing better signals and finer permissions. But the companies building browser-based agents benefit commercially from accessing content freely. The sites trying to restrict access are the ones whose content feeds those agents. That's a conflict of interest. And the new standards may have inherited exactly the framing that keeps it invisible.
